Verified Lists vs Scraping in GSA SER Explained

The Foundation of Link Building Efficiency

Every serious user of GSA Search Engine Ranker eventually faces a critical operational decision. The software is a powerhouse, but its output is only as good as the targets you feed it. This brings us to the unavoidable debate around GSA SER verified lists vs scraping. Choosing the wrong approach doesn't just waste time; it burns through captcha credits, proxies, and ultimately, your ranking potential. Understanding the mechanics of each method is the first step toward building a campaign that actually moves the needle.

The Mechanics of Harvesting Fresh Targets

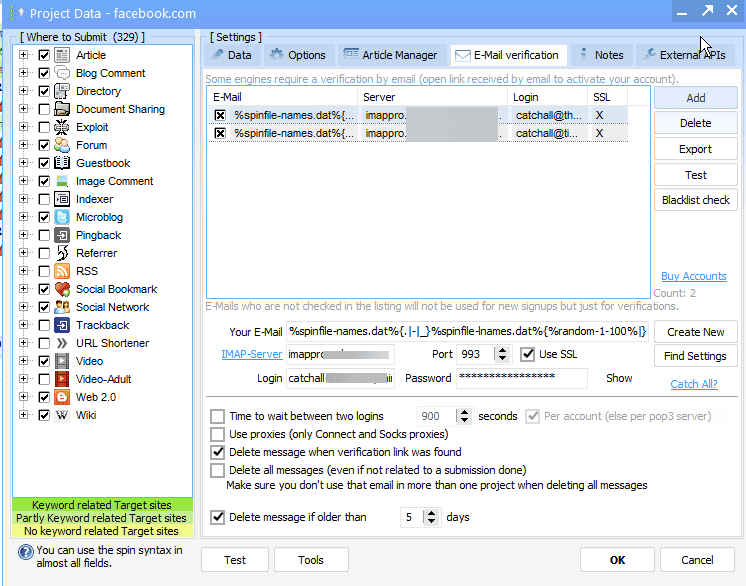

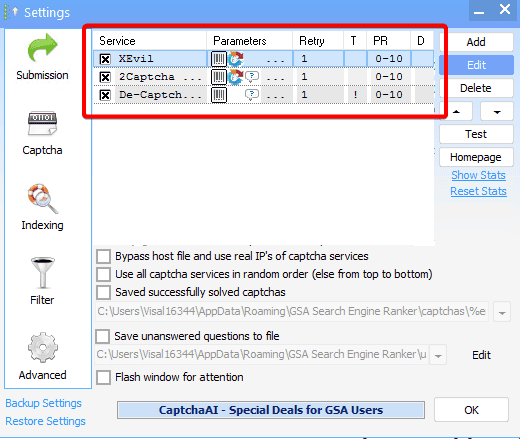

Scraping is GSA SER's native, built-in way of finding targets. The software queries search engines like Google, Bing, and Yahoo using your chosen keywords and footprints, then applies platform-specific engines to identify where it can post. This process is dynamic and theoretically limitless. The primary argument for scraping is relevance. You are hunting for targets that exist right now, indexed for specific keywords, and directly related to your niche. The contextual connection between a scraped target and your money site can be incredibly tight, which is what modern search algorithms are said to reward. The downside is raw resource consumption. A heavy scraping session will devour proxies and captcha solves at an alarming rate, often returning a high percentage of dead, duplicate, or already-spammed platforms.

Understanding Pre-Verified Target Lists

A verified list is a static database. Someone else—or a specialized service—has already run the scraping engines, attempted sign-ups, and confirmed that a URL accepts submissions. These lists are categorized by platform type: blog comments, trackbacks, social bookmarks, wikis, and article directories. When you load a verified list, you skip the discovery phase entirely. GSA SER jumps straight into the submission routine. This preserves your proxies and captcha budget because you are not paying to identify targets, only to post to them. The immediate gain is speed. You can achieve a massive blast volume in minutes. The hidden cost is staleness. A verified list begins to degrade the moment it is created. Platforms get banned, domains expire, and comment sections close. A list that was 90% live last month might be 40% live today.
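To make the staleness point concrete, here is a rough sketch that turns the "90% live last month, 40% live today" illustration into an implied daily survival rate. It assumes the list decays at a constant exponential rate over 30 days, which is a simplification rather than a measured behavior, and the function names are hypothetical.

```python
def daily_retention(start_live: float, end_live: float, days: int) -> float:
    """Per-day survival fraction implied by two liveness readings.

    Assumes constant exponential decay between the two readings.
    """
    if days <= 0 or not (0 < end_live <= start_live <= 1):
        raise ValueError("expected 0 < end_live <= start_live <= 1 and days > 0")
    return (end_live / start_live) ** (1 / days)


def live_fraction(start_live: float, retention: float, days: int) -> float:
    """Projected live fraction after `days`, given a daily retention rate."""
    return start_live * retention ** days


# Figures from the paragraph above: 90% live falling to 40% over roughly 30 days.
r = daily_retention(0.90, 0.40, 30)
print(f"implied daily retention: {r:.4f}")
print(f"projected live share after 14 days: {live_fraction(0.90, r, 14):.1%}")
```

Under that assumption, a list bought mid-month is already well below its advertised liveness, which is why re-verifying before a large run tends to be worth the time.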

GSA SER Verified Lists vs Scraping: The Showdown on Success Rates

The true battleground for GSA SER verified lists vs scraping is the verified submission rate. When you scrape, your global success rate—including the failures from dead search results and unidentifiable platforms—might sit at a grim 5-10%. However, the links you do land are often on diverse, unburnt domains. With a premium verified list, your success rate inside the software might show 60-80%. This looks fantastic on the dashboard, but it comes with a trap. High success rates on a static list usually mean you are posting to the same platforms thousands of other users are hitting. This creates massive link graphs consisting entirely of "neighborhood" spam. While you got 10,000 verified links, 9,000 of them might appear on domains shared with malware, pharma spam, or worse.
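The dashboard number and the useful number can diverge sharply, and a little arithmetic shows how. The sketch below uses the 10,000 and 9,000 figures from the paragraph above; the 7.5% scraping success rate is the midpoint of the 5-10% range, and the 80% clean share for scraped links is purely a hypothetical assumption for illustration.

```python
import math


def clean_yield(verified_links: int, shared_domain_links: int) -> int:
    """Verified links that do not sit on heavily shared, spammed domains."""
    return verified_links - shared_domain_links


def attempts_needed(target_clean: int, success_rate: float, clean_share: float) -> int:
    """Submission attempts needed to land `target_clean` usable links."""
    return math.ceil(target_clean / (success_rate * clean_share))


# Verified list: 10,000 verified links, 9,000 of them on shared spam domains.
list_clean = clean_yield(10_000, 9_000)

# Scraping: 7.5% success (midpoint of 5-10%), 80% clean share (assumed).
scrape_attempts = attempts_needed(list_clean, success_rate=0.075, clean_share=0.8)

print(f"clean links from the list run: {list_clean}")
print(f"scrape attempts for the same clean yield: {scrape_attempts}")
```

The point of the comparison is not the specific numbers but the metric: judging each method by clean links landed, not by the success percentage shown in the software.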

Resource Allocation and Hidden Costs

The financial logic is often distorted by short-term thinking. Scraping feels expensive because you see the captcha bill rising in real-time. Yet, a high-quality verified list isn't free; a good monthly subscription can match the cost of several million captcha solves. Furthermore, if you use a verified list and fail to properly filter it, you will post links to penalized domains that actively hurt your trust score. The cleanup cost later, in terms of wasted link removals or a Google penalty, dwarfs the initial outlay. Smart operators often realize that the proxy wear-and-tear from scraping is actually an investment in footprint diversity, a variable you surrender entirely when relying on a list compiled by a third party who uses generic footprints.

Building a Hybrid Engine for Sustainable Rankings

The most durable strategy isn't choosing one side. It’s layering them. Use verified lists strictly for the "chaff" links that pad your referring domain count on mass platforms like guestbooks or image comments, where quality is a non-factor. Run these campaigns on cheap proxies and use them to widen your anchor text diversity. Simultaneously, let a tightly configured scraping project run on premium private proxies. Point this scraping at the difficult platforms: contextual article directories, high-dofollow forums, and niche-related blogs. This ensures your Tier 1 or high-quality Tier 2 links are coming from fresh, unspammed sources that share a topical relationship with your niche. The scraping side feeds your real ranking power, while the verified list side provides the sheer volume of nofollow and low-power links that make a backlink profile look natural.

When the Algorithm Sniffs Out Uniformity

Search engines are not just looking at your domain; they are looking at your link velocity and source diversity. A blast of 20,000 verified links leaves a footprint. A search engine might see the same custom pages, the same registration usernames, and the same server IPs all linking to you in a short window. This is a signature. Scraping, in contrast, introduces organic chaos. The platforms are discovered through varying keyword sequences, leading to a wider spread of Class C IP ranges and domain ages. The best defense against algorithmic filtering is this unpredictability. A campaign built solely on verified lists looks like a mechanical printing press, while a scraped campaign mimics the uneven distribution of a viral piece of content.
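One way to put a number on source diversity is to count how many distinct Class C (/24) blocks your linking IPs fall into. The following is a minimal sketch using Python's standard `ipaddress` module; the sample addresses are reserved documentation ranges, and the ratio itself is an illustrative metric, not something search engines are known to compute.

```python
import ipaddress
from collections import Counter


def class_c_block(ip: str) -> str:
    """Return the /24 network that contains an IPv4 address."""
    return str(ipaddress.ip_network(f"{ip}/24", strict=False))


def subnet_diversity(ips: list[str]) -> tuple[float, Counter]:
    """Share of distinct /24 blocks among the IPs, plus the per-block counts."""
    blocks = Counter(class_c_block(ip) for ip in ips)
    ratio = len(blocks) / len(ips) if ips else 0.0
    return ratio, blocks


# Hypothetical sample: two addresses share a /24, two stand alone.
sample = ["192.0.2.10", "192.0.2.77", "198.51.100.5", "203.0.113.9"]
ratio, blocks = subnet_diversity(sample)

print(f"diversity ratio: {ratio:.2f}")
for block, count in blocks.most_common():
    print(block, count)
```

A ratio near 1.0 means almost every link comes from its own block; a low ratio suggests many links are clustered on the same hosts, which is the uniformity described above.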

Final Verdict on Operational Strategy

Stop looking at GSA SER verified lists vs scraping as a binary choice. The software was designed to scrape; the verified list market emerged to solve the problem of lazy configuration. If your hardware, proxy pool, and captcha budget allow it, you should always be scraping your core tier. A dedicated server with 50 threads of targeted scraping will over time build an internal database far more valuable than any mass-market list. Keep a verified list on standby for those moments when you need to pump out a rapid social signal burst or when your target URLs require a sheer volume of nofollow foundation links. The real answer to the debate is that a list cannot think, and an algorithm cannot stop adapting. Merge the raw efficiency of verified data with the adaptive intelligence of live scraping, and you finally align your tool with the way search engines actually evaluate trust.